[IROS24] PreAfford: Universal Affordance-Based Pre-Grasping for Diverse Objects and Environments

Abstract

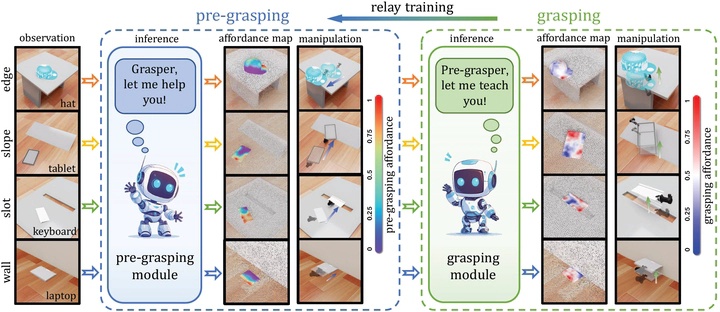

Robotic manipulation with two-finger grippers is challenged by objects lacking distinct graspable features. Traditional pre-grasping methods, which typically involve repositioning objects or utilizing external aids like table edges, are limited in their adaptability across different object categories and environments. To overcome these limitations, we introduce PreAfford, a novel pre-grasping planning framework incorporating a point-level affordance representation and a relay training approach. Our method significantly improves adaptability, allowing effective manipulation across a wide range of environments and object types. When evaluated on the ShapeNet-v2 dataset, PreAfford not only enhances grasping success rates by 69% but also demonstrates its practicality through successful real-world experiments. These improvements highlight PreAfford’s potential to redefine standards for robotic handling of complex manipulation tasks in diverse settings.