[CogSci26] Grounding Before Generalizing: How AI Differs from Humans in Causal Transfer

Abstract

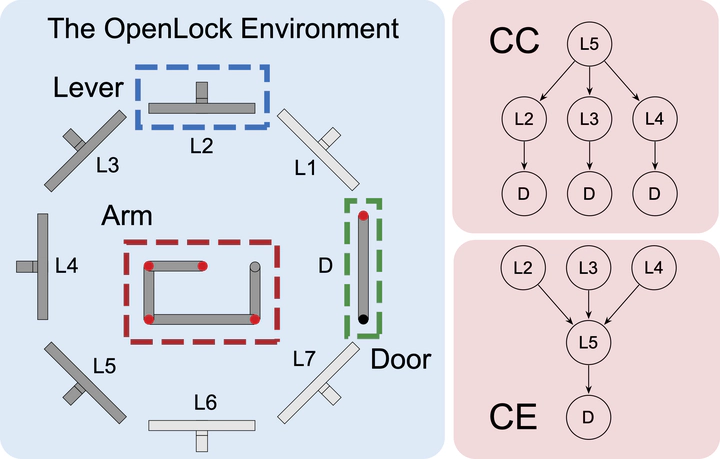

Extracting abstract causal structures and applying them to novel situations is a hallmark of human intelligence (Griffiths & Tenenbaum, 2005; Holyoak & Cheng, 2011; Lake et al., 2017). While Large Language Models (LLMs) and Vision Language Models (VLMs) have shown strong performance on a wide range of reasoning tasks (Brown et al., 2020; Xu et al., 2025), their capacity for interactive causal learning (inducing latent structures through sequential exploration and transferring them across contexts) remains uncharacterized. Human learners accomplish such transfer after minimal exposure, whereas classical Reinforcement Learning (RL) agents fail catastrophically (Edmonds et al., 2018). Whether state-of-the-art Artificial Intelligence (AI) models possess human-like mechanisms for abstract causal structure transfer is an open question. Using the OpenLock paradigm (Edmonds et al., 2018), which requires sequential discovery of Common Cause (CC) and Common Effect (CE) structures, here we show that models exhibit fundamentally delayed or absent transfer: even successful models require an initial environment-specific mapping, which we term environmental grounding, before efficiency gains emerge, whereas humans leverage prior structural knowledge from the very first solution attempt. In the text-only condition, models matched or exceeded human discovery efficiency. In contrast, visual information, in both the image-only and text-and-image conditions, generally degraded rather than enhanced performance, revealing a broad reliance on symbolic processing rather than integrated multimodal reasoning. Models further exhibited systematic CC/CE asymmetries absent in humans, suggesting heuristic biases rather than direction-neutral causal abstraction. These findings reveal that large-scale statistical learning does not produce the decontextualized causal schemas underpinning human analogical reasoning, establishing grounding-dependent transfer as a fundamental limitation of current LLMs and VLMs.