Abstract

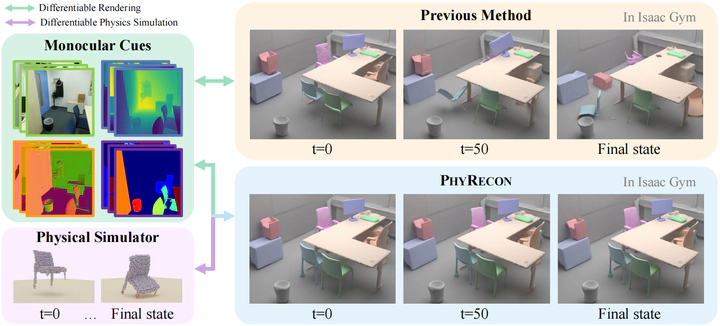

Neural implicit representations have gained popularity in multi-view 3D reconstruction. However, most previous work struggles to yield physically plausible results, limiting their utility in domains requiring rigorous physical accuracy, such as embodied AI and robotics. This lack of plausibility stems from the absence of physics modeling in existing methods and their inability to recover intricate geometrical structures. In this paper, we introduce PhyRecon, the first approach to leverage both differentiable rendering and differentiable physics simulation to learn implicit surface representations. PhyRecon features a novel differentiable particle-based physical simulator built on neural implicit representations. Central to this design is an efficient transformation between SDF-based implicit representations and explicit surface points via our proposed Surface Points Marching Cubes (SP-MC), enabling differentiable learning with both rendering and physical losses. Additionally, PhyRecon models both rendering and physical uncertainty to identify and compensate for inconsistent and inaccurate monocular geometric priors. This physical uncertainty further facilitates a novel physics-guided pixel sampling to enhance the learning of slender structures. By integrating these techniques, our model supports differentiable joint modeling of appearance, geometry, and physics. Extensive experiments demonstrate that PhyRecon significantly outperforms all state-of-the-art methods. Our results also exhibit superior physical stability in physical simulators, with at least a 40% improvement across all datasets, paving the way for future physics-based applications.